
High-quality switch supplier invites you to cooperate

Dear Info,

We are a leading high-quality switch manufacturer. With many years of industry experience and high-quality products, we serve many partners around the world and are widely praised.

Our core products:

Micro switch: high sensitivity, suitable for electrical appliances, automobiles, industrial equipment, medical and other industries;

Rocker switch: diversified design to meet different needs;

Push button switch: durable, widely used in a variety of industries;

Keyboard switch: comfortable feel, designed for user experience.

Our factory has passed ISO certification and provides fast delivery, OEM/ODM services, and can tailor solutions according to your specific needs.

I hope to have the opportunity to understand your company's needs and explore the possibility of cooperation. If you need samples or quotations, please feel free to contact me and I will provide you with detailed information.

Best regards&Thanks

David Wu

Yueqing Tongda Wire Electric Factory

ADD:No.8 Chuanger Road,Yuqing Bay Port Area, Wenzhou,Zhejiang,325609

Tel: 0086-577 57158583

Mobil: 0086-13780102300

Web: www.chinaweipeng.com

Mail: wdm@tdweipeng.com(If email Failed, pls send to weipeng@tdweipeng.com)

Made-In-China: tongdaswitch.en.made-in-china.com

by "Momen Slama" <momenslama451@gmail.com> - 10:01 - 10 Jun 2025 -

Professional Solutions to Cutting Challenges in the Building Decoration Industry

Dear Info,

I'm Xavier from Koocut Cutting Technology (Sichuan) Co., Ltd. Our company has extensive experience in the cutting-tool industry, thanks to the technical accumulation of our parent company over the past two decades.

In the building decoration industry, precision and efficiency are of utmost importance. Our PCD cement-fiber-board-processing circular saw blades and TCT color-steel-plate-processing circular saw blades are specifically designed to address the cutting challenges in this field. These blades can cut various building materials with high precision, resulting in smooth edges and minimal waste.

Our factory, operating in line with Industry 4.0 standards, features intelligent and flexible production capabilities. This means we can quickly adjust production according to your specific order requirements, whether it's a small-batch customized order or a large-scale standard order. We also have a strict quality control system to ensure that every product leaving our factory meets the highest quality standards.

We've already worked with many building decoration service providers globally, helping them improve project efficiency and customer satisfaction. We believe we can do the same for your company. If you have any questions or are interested in our products, please contact me.

Best regards,

Contact Information:

WhatsApp: +86 17388372772

Email: xavier@koocut.com

Koocut Cutting Technology (Sichuan) Co., Ltd.

by "Beaner Lovely" <beanerlovely@gmail.com> - 09:59 - 10 Jun 2025 -

LIVE from Info-Tech (and what’s actually being said)


Hi MD Abul,

Greg Benton here, Chief Strategy Officer at Third Stage. Eric couldn’t make it to this year’s Info-Tech LIVE conference, so I’m stepping in to share a few thoughts from the ground.

I’m writing this from a hotel lobby, half-empty coffee in hand, name badge already flipped backward, and that familiar buzz of conference energy all around.

We’re here at Info-Tech LIVE, and like always, it’s impressive.

Screens glowing. AI demos everywhere. Consultants are speed-walking to the next panel. Booths promising transformation in bold letters.

But here’s the thing that always hits me in moments like this:

You can feel the gap between the noise and the real work.

Because while the tech looks great on the showroom floor, most of the conversations that matter? They’re happening off to the side. In quiet 1-on-1s. At the coffee stations. In the space between hype and execution.

Earlier today, someone asked me what I’m most excited about in the AI space.

And honestly?

It’s not the tools. It’s the teams finally asking better questions.

Not “What can this new system do?”

But:

- “How do we need to change to actually use this?”

- “Are our people ready?”

- “Is this even solving the right problem?”

That’s the shift we’re seeing, the one that actually moves companies forward.

We’ll be here all week, walking the floor, sitting in on sessions, and having the real conversations that don’t fit in a glossy brochure. If you’re here too, come say hi.

And if you’re not, don’t worry. We’ll bring the takeaways back with us.

That’s exactly what our Digital Stratosphere Conference this August is all about. We’re pulling back the curtain on strategy, systems, and what it takes to make AI and digital transformation work in the real world, not just the keynote stage.

Our theme this year is Mission: AI-Possible, and we’d love to see you there.

Use the code "earlybird" for 20% off your ticket(s)!

Best regards,

Greg Benton

Third Stage Consulting, 384 Inverness Pkwy, Suite #200, Englewood, Colorado 80112, United States

You received this email because you are subscribed to Marketing Information from Third Stage Consulting.

Update your email preferences to choose the types of emails you receive.

Unsubscribe from all future emails

by "Greg Benton" <greg.benton@thirdstage-consulting.com> - 09:31 - 10 Jun 2025 -

-

Premium nutritional ingredients you are looking for——in stock in our US warehouse

Dear Info,

Glad to notice that you are in the dietary supplements contract manufacturing field in the US. I hope this email is helpful for your procurement.

I'm from Shaanxi Top purity Biotech Ltd. We are a manufacturer of nutritional supplements in China, with a warehouse in California. Our products are sold factory-direct, with stable output and favorable prices.

We have been trading in nutraceuticals for over 10 years. Our products have been audited and approved by globally reputed customers, such as Glanbia, Nestlé, PIPING ROCK, NBTY, Pharmavite, Now foods, Nutricost, and Robinson pharma.

If you want to purchase any Herbs & Botanicals ingredients, Protein Powders, Probiotics, Enzymes, or Specialty products, please feel free to let me know.

Below are our current hot-sale products for your review. I believe they can help you save a lot on purchasing costs.

Should you have any interest, please feel free to let me know.

Warehouse Add:13735 iroquois Pl,Chino ,CA,91710

| Description | Qty (kg) | Price (USD/kg) |
| --- | --- | --- |
| Cascara Sagrada Powder | 950 | 9 |
| D-Biotin Tianxin | 233 | 160 |
| Deer Antler Extract 4:1 | 125 | 45 |
| Deer Antler powder | 275 | 55 |
| Devil’s Claw root powder | 200 | 8 |
| Diosmin Complex 90% (80%Diosmin&10% Hesperidin) | 275 | 55 |
| Echinacia extract | 975 | 8 |
| Epicatechin 90% | 50 | 200 |
| Fish Collagen | 500 | 18 |
| Forsythia Extract 5-1 | 350 | 8 |
| HICA;Alpha-hydroxy-isocaproate Calcium | 25 | 40 |
| Huperzine A 1% | 7 | 500 |
| Inositol -Myo | 4500 | 8 |
| Ipriflavone | 100 | 98 |
| Irish moss powder | 300 | 8 |
| Jambolan Powder | 25 | 10 |
| kale powder | 500 | 5 |
| Ledebouriella Divarciata Extract 5-1 | 275 | 8 |
| L-Glutathione Reduced | 75 | 75 |
| Ligusticum Wallichilrhizone Powder | 50 | 8 |
| Maca Extract | 225 | 8 |
| Maca Gelatinized Powder | 1000 | 8 |
| Melatonin | 230 | 72 |
| Milk Thistle Extract 80% | 500 | 30 |
| MK4 1% | 81 | 100 |
| Motherwort Powder | 300 | 5 |
| Mulberry Leaf extract | 100 | 8 |
| Neem Leaf Extract | 50 | 9 |
| Nicotinamide Adenine Dinucleotide 99% (NAD) | 300 | 130 |
| NMN-β-nicotinamide mononucleotide | 175 | 95 |
| Passion Flower Powder | 25 | 8 |
| PHOSPHATIDYLSERINE 20% | 25 | 55 |
| Pterostilbene | 20 | 300 |
| PYRROLOQUINOLINE QUINONE DISODIUM SALT | 10 | 1100 |
| Quercetin Dihydrate powder 95% | 700 | 48 |
| Resveratrol 20% | 25 | 40 |
| Rhodiola Rosea P.E. (Rosavins 3% Salidroside 1%) | 75 | 145 |
| Rutin 95% NF 11 | 750 | 28 |
| Saffron Extract 0.3% | 25 | 120 |
| Schisandra Extract 2% HPLC | 50 | 34 |
| Schisandra Extract 4:1 | 150 | 8 |
| Schisandra P.E. 2% UV | 25 | 8 |
| Sea -Buckthorn Powder | 50 | 10 |
| Shilajit Extract 20% | 100 | 35 |
| SOD(8000IU ) | 535 | 55 |
| Sodium copper chlorophyllin | 100 | 200 |
| Sodium Hyaluronate Haiber | 1800 | 82 |
| Sucralose Techno /jinhe | 1800 | 23 |
| Theobromine 99% | 500 | 47 |
| TUDCA | 205 | 350 |

Best regards,

Top purity sales team

What's App: 44 7463 099214

Wechat: 17729507767

DL: 1 909-516-8031 / 1 909-678-6521

e-mail: sales3@toppuritybio.com;sales4@sinoingredients.online

by "Biljana Lebowski" <biljanalebowski743@gmail.com> - 05:25 - 10 Jun 2025 -

GRAB YOUR FREE SEAT !!! Microsoft Excel (Basic Level) 28 & 29 July 2025

CLICK HERE TO DOWNLOAD BROCHURE

Please call 012-588 2728

email to pearl-otc@outlook.com

HYBRID PUBLIC PROGRAM

MICROSOFT EXCEL

(BASIC LEVEL)

(** Choose either Zoom OR Physical Session)

Remote Online Training (Via Zoom) &

OTC Training Centre Sdn Bhd Subang, Selangor (Physical)

(SBL Khas / HRD Corp Claimable Course)

Date: 28 July 2025 (Mon) | 9am – 5pm

29 July 2025 (Tue) | 9am – 5pm

By Siti

INTRODUCTION

This course covers all the essentials of Microsoft Office Excel. Topics covered include the new Flash Fill feature, using formulas and functions, and customizing the interface. Material is also included on how to format text, data, and workbooks; and chart data.

OBJECTIVES

· How to create, open, and save workbooks

· How to enter, select, and delete data

· How to undo and redo

· Using cut, copy, and paste functions

· Inserting rows and columns

· How to merge and split cells

· Using Paste Special, find and replace, and hiding and unhiding cells

· How to use basic formulas

· How to learn basic and advanced functions

· How to run spell check.

· How to use the sort and filter tools to organize data

· How to use AutoFill, Flash Fill, AutoSum, AutoComplete, and AutoCalculate

· Various ways to format and work with text

· Various methods to chart data

· Ways to view and distribute a workbook

· Ways to customize the interface

OUTLINE OF WORKSHOP

Module 1: The Basics

- Creating a New Workbook

- Parts of a Workbook

- Saving a Workbook

- Opening a Workbook

Module 2: Your First Workbook

- Selecting Data

- Entering and Deleting Data

- Using Undo and Redo

- Using Cut, Copy, and Paste

Module 3: Working with Data

- Inserting Rows and Columns

- Merging and Splitting Cells

- Moving Cells

- Using Paste Special

- Using Find and Replace

- Hiding and Unhiding Cells

Module 4: Using Basic Excel Tools

- Understanding Cell References and Formulas

- Using Basic Formulas

- Using Basic Functions

- Using Advanced Functions

- Using Spell Check

- Using Sort and Filter

Module 5: Using Timesaving Tools

- Using AutoFill

- Using Flash Fill

- Using AutoSum

- Using AutoComplete

- Using AutoCalculate

Module 6: Formatting Text

- Changing the Font Face, Size, and Color

- Applying Text Effects

- Applying Borders and Fill

- Using the Font Tab of the Format Cells Dialog

- Clearing Formatting

Module 7: Formatting Data

- Wrapping Text

- Changing the Size of Rows and Columns

- Adjusting Cell Alignment

- Changing Text Direction

- Changing Number Format

Module 8: Formatting the Workbook

- Using Cell Styles

- Formatting Data as a Table

- Changing the Theme

- Inserting Page Breaks

- Adding a Background

Module 9: Charting Data

- Creating Sparklines

- Inserting Charts

Module 10: Viewing, Printing, and Sharing Your Workbook

- Using Views

- Saving a Workbook as PDF or XPS

- Printing a Workbook

Module 11: Customizing the Interface

- Changing Ribbon Display Options

- Customizing the Quick Access Toolbar

- Hiding and Showing Ribbon Tabs

- Creating Custom Ribbon Tab

- Resetting Interface Changes

** Certificate of attendance will be awarded for those who completed the course

ABOUT THE FACILITATOR

Siti

Microsoft Office Specialist (MOS)

Siti started her career as an Information Technology lecturer at a few local colleges and universities in 1999. In her 8 years’ experience as a lecturer, she picked up various disciplines in IT-related subjects. She was also involved in delivering Microsoft Office Applications training to various companies.

On 20 March 2006, Siti Suriani made a career change and moved into IT-related training, where she remains to the present. As a Training Consultant, she focuses on Microsoft Office Applications training. She has facilitated training programs in conjunction with a broad range of training institutes and clients. She is proficient with Microsoft Office Applications, and during her training she addresses the day-to-day issues faced by employees in today’s corporate environment.

From 2007 to 2008, Siti Suriani was appointed as one of the Master Trainers for The Teaching and Learning of Science and Mathematics in English (Pengajaran dan Pembelajaran Sains dan Matematik Dalam Bahasa Inggeris - PPSMI). Her role as a Master Trainer was to train the trainers representing different states around Malaysia on how to deliver the training to teachers in various schools in Malaysia.

Aside from giving training, she has been engaged by Microsoft Malaysia since 2008 to share her expertise on how to make full use of Microsoft Office Applications. She has conducted many workshops around Malaysia for major Microsoft Malaysia customers, mostly focusing on tips and tricks and best practices.

Siti was involved as a Handyman in the Handyman Project under Shell Global Solutions, Malaysia from 2008 to 2011. She was given the opportunity to provide one-to-one consultation with clients by examining, asking about, and solving problems related to the data the clients provided. Examples of topics covered in Handyman sessions are E-mail and Calendar, Standard & Mobile Office, Archiving & Back-ups, NetMeeting, Livelink, Live Meeting, and Microsoft Office Applications.

From November 2010 to February 2011, ExxonMobil Malaysia gave her another golden opportunity: to be the lead trainer in the Migration from XME to GME project, training almost 3,000 staff. This training also covered Microsoft Office 2010 and Windows 7.

Academic Qualification

1999 – Bachelor of Computer Science (Honours) · Computing (Single Major) - USM

2001 – Master of Science · Distributed Computing - UPM

Working Experience

· Cybernetics International College of Technology · Lecturer · (June 1999 to May 2002)

· MARA University of Technology (UiTM Seri Iskandar) · Lecturer · (June 2002 to July 2003)

· Cosmopoint College of Technology · Lecturer · (September 2005 to March 2006)

· Iverson Associates Sdn Bhd · Senior Training Consultant · (March 2006 to February 2011)

· Info Trek Sdn Bhd · Senior Training Consultant· (February 2011 to April 2017)

· Fulltime Senior Training Consultant · (May 2017 to present)

Professional Certification

· Microsoft Certified Application Specialist for Office Excel 2007

· Microsoft Certified Application Specialist for Office PowerPoint 2007

· Microsoft Certified Application Specialist for Office Word 2007

· Microsoft Office Specialist for Office Excel 2016

· Microsoft Office Specialist for Office Word 2016

· PSMB Certified Trainer

Skills Expertise

Microsoft Office Excel version 95, 97, 2000, 2002, 2003, 2007, 2010, 2013, 2016, 2019, Office 365

Microsoft Office PowerPoint version 95, 97, 2000, 2002, 2003, 2007, 2010, 2013, 2016, 2019, Office 365

Microsoft Office Word version 95, 97, 2000, 2002, 2003, 2007, 2010, 2013, 2016, 2019, Office 365

Microsoft Office Project version 2003, 2007, 2010, 2013, 2016

Microsoft Office Outlook version 2000, 2002, 2003, 2007, 2010, 2013, 2016, 2019, Office 365

Microsoft Office Access version 97, 2000, 2002, 2003, 2007, 2010, 2013, 2016, 2019

Microsoft Office Workshops (Microsoft Malaysia)

· Bahagian Teknologi Maklumat, Pejabat SUK Negeri Selangor

· DIGI Telecommunications Sdn Bhd

· Halal Industry Development Corporation

· Institut Jantung Negara Sdn Bhd

· Lembaga Tabung Haji

· Newfield Sarawak Malaysia Inc

· PERKESO

· Securities Commission Malaysia

· Sunway Medical Centre

· Sutera Harbour Resort - The Pacific Sutera Hotel

· BSN

(SBL Khas / HRD Corp Claimable Course)

TRAINING FEE

14 hours Remote Online Training (Via Zoom)

RM 1,296.00/pax (excluded 8% SST)

2 days Face-to-Face Training (Physical Training)

RM 1,850.00/pax (excluded 8% SST)

Group Registration: Register 3 participants from the same organization, the 4th participant is FREE.

(Buy 3 Get 1 Free) if you register before 18 July 2025. Please act fast to grab your favourite training program!

We hope you find it informative and interesting and we look forward to seeing you soon.

Please call 012-588 2728 or email to pearl-otc@outlook.com

Do forward this email to all your friends and colleagues who might be interested to attend these programs

If you would like to unsubscribe from our email list at any time, please simply reply to the e-mail and type Unsubscribe in the subject area.

We will remove your name from the list and you will not receive any additional e-mail

Thanks

Regards

Pearl

by "pearl@otcsb.com.my" <pearl@otcsb.com.my> - 03:06 - 10 Jun 2025 -

Cost-Effective Performance

Dear Info,

Balancing performance and cost is critical in the UAV industry. At Micro Magic Inc, we provide high-precision inertial sensors that deliver exceptional value without compromising on quality. Our cost-effective solutions are trusted by leading drone manufacturers worldwide.

Interested in learning more? Let’s arrange a call to discuss further.

Best regards,

Young

Account Manager

Micro Magic Inc

Skype: manot27

Whatsapp: +8618151836753

Website: www.memsmag.com

by "Karaeski Martirosov" <martirosovkaraeski804@gmail.com> - 12:55 - 10 Jun 2025 -

How Lyft Uses ML to Make 100 Million Predictions A Day


Database Benchmarking for Performance: Virtual Masterclass (Sponsored)

Learn how to accurately measure database performance

Free 2-hour masterclass | June 18, 2025

This masterclass will show you how to design and execute meaningful tests that reflect real-world workload patterns. We’ll discuss proven strategies that help you rightsize your performance testing infrastructure, account for the impact of concurrency, recognize and mitigate coordinated omission, and understand probability distributions. We will also share ways to avoid common pitfalls when benchmarking high-performance databases.

After this free 2-hour masterclass, you will know how to:

Tune your query and traffic patterns based on your database and workload needs

Measure how warm-up phases, throttling, and internal database behaviors impact results

Correlate latency, throughput, and resource usage for actionable performance tuning

Report clear, reproducible benchmarking results

Disclaimer: The details in this post have been derived from the articles/videos shared online by the Lyft Engineering Team. All credit for the technical details goes to the Lyft Engineering Team. The links to the original articles and videos are present in the references section at the end of the post. We’ve attempted to analyze the details and provide our input about them. If you find any inaccuracies or omissions, please leave a comment, and we will do our best to fix them.

Hundreds of millions of machine learning inferences power decisions at Lyft every day. These aren’t back-office batch jobs. They’re live, high-stakes predictions driving every corner of the experience from pricing a ride to flagging fraud, predicting ETAs to deciding which driver gets which incentive.

Each inference runs under pressure and a single-digit millisecond budget. This translates to millions of requests per second. Dozens of teams, each with different needs and models, are pushing updates on their own schedules. The challenge is staying flexible without falling apart.

Real-time ML at scale breaks into two kinds of problems:

Data Plane pressure: Everything that happens in the hot path. This includes CPU and memory usage, network bottlenecks, inference latency, and throughput ceilings.

Control Plane complexity: This is everything around the model. Think of aspects like deployment and rollback, versioning, retraining, backward compatibility, experimentation, ownership, and isolation.

Early on, Lyft leaned on a shared monolithic service to serve ML models across the company. However, the monolith created more friction than flexibility. Teams couldn’t upgrade libraries independently. Deployments clashed and ownership blurred. Small changes in one model risked breaking another, and incident investigation turned into detective work.

The need was clear: build a serving platform that makes model deployment feel as natural as writing the model itself. It had to be fast, flexible, and team-friendly without hiding the messy realities of inference at scale.

In this article, we’ll look at how Lyft built an architecture to accomplish this requirement and the challenges they faced.

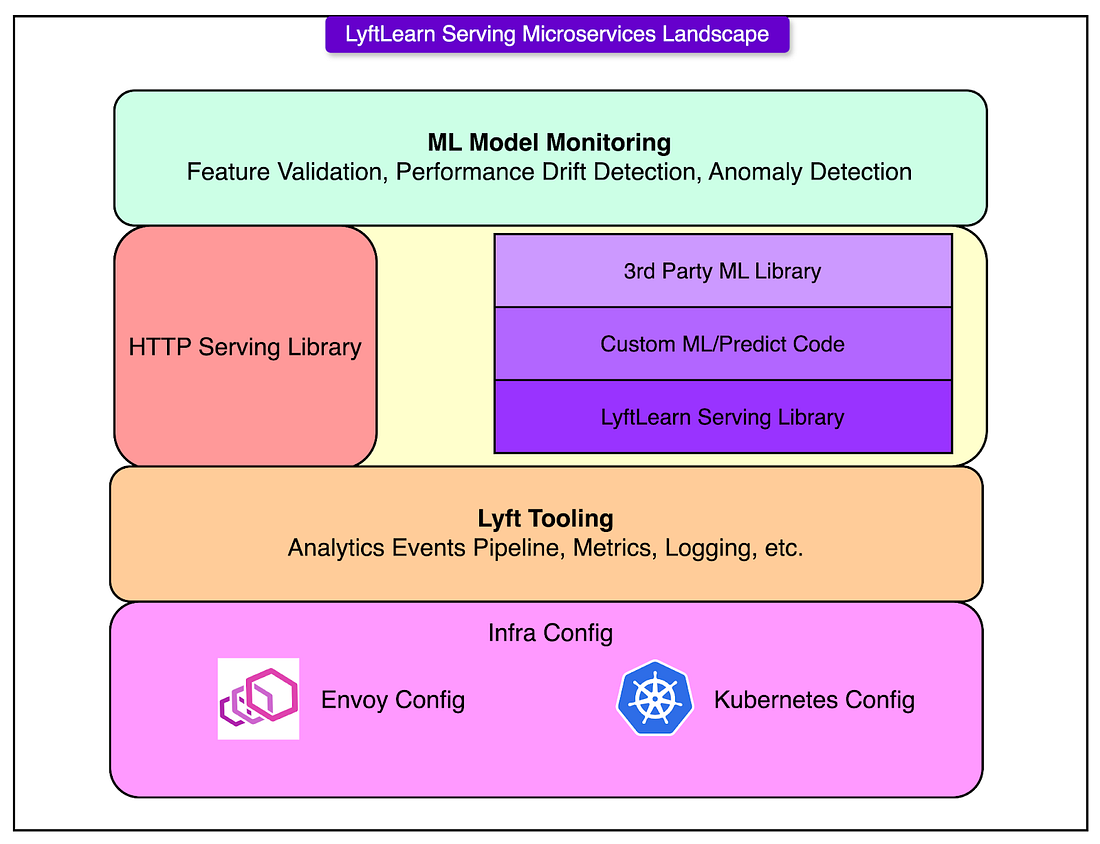

Architecture and System Components

LyftLearn Serving doesn’t reinvent the wheel. It slots neatly into the microservices foundation already powering the rest of Lyft. The goal wasn’t to build a bespoke ML serving engine from scratch. It was to extend proven infrastructure with just enough intelligence to handle real-time inference, without bloating the system or boxing teams in.

At the core is a dedicated microservice: lightweight, composable, and self-contained. Each team runs its own instance, backed by Lyft's service mesh and container orchestration stack. The result: fast deploys, predictable behavior, and clean ownership boundaries.

Let’s break down this architecture flow diagram:

HTTP Serving Layer

Every request to a LyftLearn Serving service hits an HTTP endpoint first. This interface is built using Flask, a minimalist Python web framework. While Flask alone wouldn’t scale to production workloads, it’s paired with Gunicorn, a pre-fork WSGI server designed for high concurrency.

To make this stack production-grade, Lyft optimized the setup to align with Envoy, the service mesh that sits in front of all internal microservices. These optimizations ensure:

Low tail latency under high request volume.

Smooth connection handling across Envoy-Gunicorn boundaries.

Resilience to transient network blips.

This layer keeps the HTTP interface thin and efficient, just enough to route requests and parse payloads.

Core Serving Library

This is where the real logic lives. The LyftLearn Serving library handles the heavy lifting:

Model loading and unloading: Dynamically brings models into memory from saved artifacts

Versioning: Tracks and manages different model versions cleanly

Shadowing: Enables safe testing by running inference on new models in parallel, without affecting live results

Monitoring and logging: Emits structured logs, metrics, and tracing for each inference request

Prediction logging: Captures outputs for later audit, analytics, or model debugging

This library is the common runtime used across all teams. It centralizes the “platform contract” so individual teams don’t need to re-implement the basics. But it doesn’t restrict customization.
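As an illustration of the shadowing idea listed above, here is a minimal sketch in plain Python. The function name `predict_with_shadow` and its parameters are hypothetical and are not part of LyftLearn Serving. For simplicity the shadow model runs right after the primary model rather than truly in parallel.

```python
import logging
from typing import Any, Callable, Optional

logger = logging.getLogger("shadow_sketch")

# A predictor is any callable that maps features to a prediction.
Predictor = Callable[[Any], Any]


def predict_with_shadow(
    features: Any,
    primary: Predictor,
    shadow: Optional[Predictor] = None,
) -> Any:
    """Return the primary model's prediction; run the shadow model on the side."""
    result = primary(features)
    if shadow is not None:
        try:
            shadow_result = shadow(features)
            # The shadow output is only logged for later comparison.
            logger.info("shadow=%r primary=%r", shadow_result, result)
        except Exception:
            # A failing shadow model must never affect the live response.
            logger.exception("shadow model failed")
    return result
```

The caller only ever sees the primary result, so a candidate model can be observed on live inputs without changing what is served.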

Custom ML/Predict Code

The core library is dependency-injected with team-owned inference logic. Every team provides two Python functions:

```python
def load(self, file: str) -> Any:
    # Custom deserialization logic for the trained model
    ...

def predict(self, features: Any) -> Any:
    # Custom inference logic using the loaded model
    ...
```

Source: Lyft Engineering Blog

This design keeps the platform flexible. A team can use any model structure, feature format, or business logic, as long as it adheres to the basic interface. This works because the prediction path is decoupled from the transport and orchestration layers.
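To make the interface concrete, here is a small sketch of how a team-owned class could plug into a platform-side runtime that knows only about `load()` and `predict()`. `LinearModel`, `ServingRuntime`, the JSON artifact format, and `demo()` are all hypothetical names invented for this example. They do not come from Lyft's codebase.

```python
import json
import os
import tempfile
from typing import Any, List


class LinearModel:
    """Hypothetical team-owned model: a weighted sum stored as JSON."""

    def load(self, file: str) -> Any:
        # Custom deserialization: read weights from a JSON artifact.
        with open(file, "r", encoding="utf-8") as fh:
            self.weights: List[float] = json.load(fh)["weights"]
        return self

    def predict(self, features: Any) -> Any:
        # Custom inference: dot product of features and weights.
        return sum(w * x for w, x in zip(self.weights, features))


class ServingRuntime:
    """Hypothetical stand-in for the platform side; it knows only load/predict."""

    def __init__(self, model: Any, artifact_path: str) -> None:
        self._model = model
        self._model.load(artifact_path)

    def infer(self, features: Any) -> Any:
        return self._model.predict(features)


def demo() -> float:
    # Write a throwaway artifact, then serve one prediction from it.
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "model.json")
        with open(path, "w", encoding="utf-8") as fh:
            json.dump({"weights": [0.5, 2.0]}, fh)
        runtime = ServingRuntime(LinearModel(), path)
        return runtime.infer([2.0, 1.0])
```

Swapping `LinearModel` for a class backed by another framework would not require touching `ServingRuntime`, which is the point of the injected interface.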

Third-Party ML Library Support

LyftLearn Serving makes no assumptions about the ML framework. Whether the model uses TensorFlow, PyTorch, LightGBM, XGBoost, or a home-grown solution, it doesn’t matter.

As long as the model loads and predicts through Python, it’s compatible. This lets teams:

Upgrade to newer framework versions without coordination.

Use niche or experimental libraries.

Avoid vendor lock-in or rigid SDKs.

Framework choice becomes a modeler’s decision, not a platform constraint.

Integration with Lyft Infrastructure

The microservice integrates deeply with Lyft’s existing production stack:

Metrics and tracing plug into the company-wide observability pipeline.

Logs and prediction events feed into central analytics systems.

Kubernetes handles service orchestration and autoscaling.

Envoy service mesh provides secure, discoverable network communication.

This alignment avoids duplicating effort. Teams inherit baseline reliability, visibility, and security from the rest of Lyft’s infrastructure, without needing to configure it themselves.

Isolation and Ownership Principles

When dozens of teams deploy and serve ML models independently, shared infrastructure quickly becomes shared pain. One broken deploy can block five others. A single library upgrade triggers weeks of coordination and debugging turns into blame-shifting. That’s what LyftLearn Serving was built to avoid.

The foundation of its design is hard isolation by repository, not as a policy, but as a technical boundary enforced at every layer of the stack.

One Repo, One Service, One Owner

Every team using LyftLearn Serving gets its own GitHub repository. This repo isn’t just for code; it defines the entire model-serving lifecycle:

The service code, including custom load() and predict() logic.

The configuration files for deployment and orchestration.

The integration hooks for CI/CD, monitoring, and metrics.

There’s no central repository to manage and no shared runtime to coordinate. If a team needs five models, they can choose to host them in one repo or split them across five.

Independent Deploy Pipelines

Each repo comes with its own deploy pipeline, fully decoupled from others. This includes:

Staging and production environments.

CI jobs that run model self-tests and linting.

Version tagging and release promotion.

If one team pushes broken code, it doesn’t affect anyone else. If another needs to hotfix a bug, they can deploy instantly. Isolation removes the need for cross-team coordination during high-stakes production changes.

Runtime Isolation via Kubernetes and Envoy

LyftLearn Serving runs on top of Lyft’s Kubernetes and Envoy infrastructure. The platform assigns each team:

A dedicated namespace in the Envoy service mesh

Isolated Kubernetes resources (pods, services, config maps)

Customizable CPU, memory, and replica settings

Team-specific autoscaling and alerting configs

This ensures that runtime faults, whether a memory leak, high CPU usage, or a bad deployment, stay contained within the team that owns them. A surge in traffic to one team’s model won’t starve resources for another. A crash in one container doesn’t bring down the serving infrastructure.

Tooling: Config Generator

Getting a model into production shouldn’t mean learning multiple configuration formats, wiring up runtime secrets, or debugging broken deploys caused by missing database entries.

To streamline this, LyftLearn Serving includes a Config Generator: a bootstrapping tool that wires up everything needed to go from zero to a working ML serving microservice. Spinning up a new LyftLearn Serving instance involves stitching together pieces from across the infrastructure stack:

Terraform for provisioning cloud infrastructure.

YAML and Salt for Kubernetes and service mesh configuration.

Python for defining the serving interface.

Runtime secrets for secure credential access.

Database entries for model versioning or feature lookups.

Envoy mesh registration for service discovery.

Expecting every ML team to hand-craft this setup would be a recipe for drift, duplication, and onboarding delays. The config generator collapses that complexity into a few guided inputs.

The generator runs on Yeoman, a scaffolding framework commonly used for bootstrapping web projects, but customized here for Lyft’s internal systems.

A new team running the generator walks through a short interactive session:

Define the team name.

Specify the service namespace.

Choose optional integrations (for example, logging pipelines and model shadowing).

The tool then emits a fully-formed GitHub repo with:

Working example code for the load() and predict() functions.

Pre-wired deployment scripts and infrastructure configs.

Built-in test data scaffolds and CI setup.

Hooks into monitoring and observability.

Once the repo is generated and the code is committed, the team gets a functioning microservice, ready to accept models, run inference, and serve real traffic inside Lyft’s mesh. Teams can iterate on the model logic immediately, without first untangling infrastructure.
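The scaffolding idea can be sketched in a few lines of Python. This is only an illustration of turning a handful of answers into a repo skeleton. Lyft's generator is built on Yeoman, and the file names, template, and `generate_repo` function below are hypothetical.

```python
from string import Template
from typing import Dict, List

# Hypothetical template for the generated serving module.
MODEL_TEMPLATE = Template(
    '"""Serving code for team $team (namespace $namespace)."""\n'
    "\n"
    "def load(self, file):\n"
    "    ...\n"
    "\n"
    "def predict(self, features):\n"
    "    ...\n"
)


def generate_repo(team: str, namespace: str, integrations: List[str]) -> Dict[str, str]:
    """Map relative file paths to generated file contents."""
    files = {
        "model.py": MODEL_TEMPLATE.substitute(team=team, namespace=namespace),
        "service.cfg": f"team={team}\nnamespace={namespace}\n",
    }
    for name in integrations:
        # One stub config per optional integration the team opted into.
        files[f"integrations/{name}.cfg"] = f"enabled=true\nowner={team}\n"
    return files
```

For example, `generate_repo("pricing", "pricing-ml", ["shadowing"])` returns three files: the serving module, a service config, and one integration stub.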

Model Self-Testing System

Model serving can often drift when a new dependency sneaks in, slightly changing output behavior. For example, a training script gets updated, but no one notices the prediction shift. Or, a container upgrade silently breaks deserialization. By the time someone spots the drop in performance, millions of bad inferences have already shipped.

To fight this, LyftLearn Serving introduces a built-in Model Self-Testing System. It’s a contract embedded inside the model itself, designed to verify behavior at the two points that matter most: before merge and after deploy.

Every model class defines a test_data property: structured sample inputs with expected outputs:

```python
class SampleModel(TrainableModel):
    @property
    def test_data(self) -> pd.DataFrame:
        return pd.DataFrame(
            [
                [[1, 0, 0], 1],  # Input should predict close to 1
                [[1, 1, 0], 1],
            ],
            columns=["input", "score"],
        )
```

Source: Lyft Engineering Blog

This isn’t a full dataset. It’s a minimal set of hand-picked examples that act as canaries. If a change breaks expected behavior on these inputs, something deeper is likely wrong. The test data travels with the model binary and becomes part of the serving lifecycle.
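A rough sketch of how such canary examples might be checked is shown below. It uses plain tuples instead of a pandas DataFrame so that it runs without third-party packages. The `run_self_test` helper, the `tolerance` parameter, and the toy predictor are assumptions made for illustration, not Lyft's implementation.

```python
from typing import Any, Callable, List, Sequence, Tuple

# Each entry pairs a sample input with the score it is expected to produce.
TestData = Sequence[Tuple[Any, float]]


def run_self_test(
    predict: Callable[[Any], float],
    test_data: TestData,
    tolerance: float = 0.1,
) -> List[Tuple[Any, float, float]]:
    """Return (input, expected, actual) for every example outside tolerance."""
    failures = []
    for features, expected in test_data:
        actual = predict(features)
        if abs(actual - expected) > tolerance:
            failures.append((features, expected, actual))
    return failures


# Toy stand-in for a trained model: the score is simply the first feature.
def toy_predict(features: Sequence[int]) -> float:
    return float(features[0])


# Same two examples as the snippet above, as (input, expected score) pairs.
SAMPLE_TEST_DATA = [([1, 0, 0], 1.0), ([1, 1, 0], 1.0)]
```

An empty result from `run_self_test(toy_predict, SAMPLE_TEST_DATA)` means every canary stayed within tolerance. A non-empty list names exactly which inputs drifted.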

Two checkpoints that matter are as follows:

During Deployment Runtime

After a model loads inside a LyftLearn Serving instance, it immediately runs predictions on its test_data. The results:

Get logged and surfaced in metrics dashboards.

Trigger alerts if predictions drift too far from expected.

Provide an immediate signal of runtime integrity.

This catches subtle breakages caused by environment mismatches, for example a model trained in Python 3.8 but deployed into a Python 3.10 container with incompatible dependencies.

During Pull Requests

When a developer opens a PR in the model repo, CI kicks in and does the following:

Loads the new model artifacts.

Runs predictions on the stored test_data.

Compares outputs against previously known-good results.
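The comparison step in this list might look something like the sketch below. It assumes known-good outputs are stored as a mapping from a test-case ID to a score. The `find_regressions` helper, the case IDs, and the `max_delta` value are hypothetical and are not part of LyftLearn Serving.

```python
from typing import Dict, List


def find_regressions(
    known_good: Dict[str, float],
    new_outputs: Dict[str, float],
    max_delta: float = 0.05,  # assumed acceptable delta
) -> List[str]:
    """Return IDs of test cases whose output moved more than max_delta or went missing."""
    regressions = []
    for case_id, baseline in known_good.items():
        new_value = new_outputs.get(case_id)
        if new_value is None or abs(new_value - baseline) > max_delta:
            regressions.append(case_id)
    return regressions


# Illustrative values only: case_b shifts by 0.17 and should be flagged.
known_good = {"case_a": 0.98, "case_b": 0.97}
new_outputs = {"case_a": 0.97, "case_b": 0.80}

failed_cases = find_regressions(known_good, new_outputs)
exit_code = 1 if failed_cases else 0  # a CI job would exit with this code
```

Returning the list of failing cases, rather than a bare pass/fail flag, lets the CI log show exactly which canary inputs moved.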

If the outputs shift beyond an acceptable delta, the PR fails, even if the code compiles and the service builds cleanly. The diagram below shows a typical development flow:

Inference Request Lifecycle

A real-time inference system lives in the milliseconds between an HTTP request and a JSON response. That tiny window holds a lot more than model math. It’s where routing, validation, prediction, logging, and monitoring converge.

LyftLearn Serving keeps the inference path slim but structured. Every request follows a predictable, hardened lifecycle that allows flexibility without sacrificing control.

Here’s how a request gets served, step by step:

Request hits the Flask endpoint: The service exposes an /infer route via Flask, backed by Gunicorn workers. Envoy handles the upstream routing. The incoming payload is parsed and handed off to the core serving library.

The core library retrieves the model: Using the model_id, the runtime looks up the corresponding model. If the model isn’t already loaded into memory, the system calls the team-supplied load() function and loads it on demand. This is where versioning logic, caching, and model lifecycle controls kick in. There’s no global registry and no shared memory. Each service owns and manages its models.

Input validation and preprocessing: Before running inference, the platform performs sanity checks on the features object, such as type or shape validation, required field presence, and optional model-specific hooks. This step guards against malformed inputs and prevents undefined behavior in downstream logic.

User-defined predict() runs the inference: Once the inputs are deemed valid, the system hands control to the custom predict() function written by the modeler. This function converts inputs into the expected format and calls the underlying ML framework (for example, LightGBM, TensorFlow) to return the prediction output. The predict() function is hot-path code. It runs millions of times per day. Its performance and correctness directly affect latency and downstream decisions.

Logs, metrics, and analytics: After the output is generated, the platform automatically logs the request and response for debugging and audit trails. It also emits latency, throughput, and error rate metrics and triggers analytics events that flow into dashboards or real-time monitoring. This observability layer ensures every inference can be traced and every service behavior can be measured.

Response returned to the caller: Finally, the result is packaged into a JSON response and returned via Flask. From request to response, the entire path is optimized for speed, traceability, and safety.
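The steps above can be sketched as a single handler function. To stay runnable with only the standard library, this version leaves out Flask, Gunicorn, and Envoy and models just the logic behind the /infer route. The `load` function, the `distance_km` feature, the `eta_v1` model ID, and the response shape are all illustrative assumptions.

```python
import logging
import time
from typing import Any, Callable, Dict

logger = logging.getLogger("serving")

Predictor = Callable[[Dict[str, Any]], float]

# Per-service cache of loaded models (the text says there is no global registry).
_loaded_models: Dict[str, Predictor] = {}
load_calls = 0  # counts load() invocations, for illustration only


def load(model_id: str) -> Predictor:
    """Hypothetical team-supplied loader; returns a predict function."""
    global load_calls
    load_calls += 1
    return lambda features: 0.5 * features["distance_km"]


def get_model(model_id: str) -> Predictor:
    """Look the model up, loading it on demand the first time it is requested."""
    if model_id not in _loaded_models:
        _loaded_models[model_id] = load(model_id)
    return _loaded_models[model_id]


def validate(features: Any) -> None:
    """Sanity-check required fields and types before inference."""
    if not isinstance(features, dict):
        raise ValueError("features must be an object")
    if not isinstance(features.get("distance_km"), (int, float)):
        raise ValueError("distance_km must be a number")


def handle_infer(payload: Dict[str, Any]) -> Dict[str, Any]:
    """Retrieve model, validate, predict, log, and build the response."""
    started = time.perf_counter()
    try:
        predict = get_model(payload["model_id"])
        validate(payload.get("features"))
        response = {"status": 200, "output": predict(payload["features"])}
    except (KeyError, ValueError) as exc:
        response = {"status": 400, "error": str(exc)}
    latency_ms = (time.perf_counter() - started) * 1000
    logger.info(
        "infer model_id=%s status=%s latency_ms=%.2f",
        payload.get("model_id"), response["status"], latency_ms,
    )
    return response


first = handle_infer({"model_id": "eta_v1", "features": {"distance_km": 4}})
second = handle_infer({"model_id": "eta_v1", "features": {"distance_km": 10}})
bad = handle_infer({"model_id": "eta_v1", "features": {"distance_km": "far"}})
```

In this sketch the second request reuses the cached model, so `load()` runs only once, and the malformed request is rejected before `predict()` is ever called.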

Conclusion

Serving machine learning models in real time isn’t just about throughput or latency. It’s about creating systems that teams can trust, evolve, and debug without friction.

LyftLearn Serving didn’t emerge from a clean slate or greenfield design. It was built under pressure to scale, to isolate, and to keep dozens of teams moving fast without stepping on each other’s toes.

Several lessons surfaced along the way, and they’re worth understanding:

“Model” means different things to different people. A serialized object, a training script, and a prediction endpoint all fall under the same label. Without clear definitions across tooling and teams, confusion spreads fast.

Documentation is part of the product. If teams can’t onboard, debug, or extend without asking the platform team, the system doesn’t scale. LyftLearn Serving treats docs as first-class citizens.

Once a model is deployed behind an API, someone, somewhere, will keep calling it. Therefore, stability is a requirement for any serving system that expects to live in production.

Trade-offs aren’t optional. Seamless UX conflicts with flexible composability. Structured pipelines clash with custom workflows. Every decision makes something easier and something else harder. The trick is knowing who the system is really for and being honest about what’s being optimized.

Power users shape the platform. Build for the most advanced, most demanding teams first. If the platform meets their needs, it’ll likely meet everyone else's. If not, it won’t scale past the first few adopters.

Prefer boring tech when it works. Stability, debuggability, and operational maturity count for more in production than novelty.

LyftLearn Serving is still evolving, but its foundations hold. It doesn’t try to hide complexity; it isolates it, and it enforces a contract around how models behave in production.

SPONSOR US

Get your product in front of more than 1,000,000 tech professionals.

Our newsletter puts your products and services directly in front of an audience that matters - hundreds of thousands of engineering leaders and senior engineers - who have influence over significant tech decisions and big purchases.

Space Fills Up Fast - Reserve Today

Ad spots typically sell out about 4 weeks in advance. To ensure your ad reaches this influential audience, reserve your space now by emailing sponsorship@bytebytego.com.

© 2025 ByteByteGo

548 Market Street PMB 72296, San Francisco, CA 94104

by "ByteByteGo" <bytebytego@substack.com> - 11:38 - 10 Jun 2025 -

New: The smartest way to run HR and payroll

Hi MD,

Growing your team shouldn’t mean juggling different tools for every country and every employee type. But for most companies, that’s the reality: up to 73% of time is lost to manual work, while payroll errors cost thousands.

Introducing Remote HRIS:

Effortless HR. Accurate payroll. All in one place.

Remote HRIS brings all your employee data into one place, from hiring and onboarding to leave and time off, expenses, payroll, and more. You can:

- Hire and onboard employees and contractors anywhere

- Run payroll and HR in the same system

- Automate manual tasks with AI and no-code workflows

- Launch performance reviews with the new Remote Perform: a better way to manage feedback, goals, and reviews

And we're just getting started. Coming soon:

- A smarter way to manage compensation

- Automated onboarding workflows

- AI-powered engagement surveys

- ...and much, much more

You received this email because you are subscribed to

News & Offers from Remote Europe Holding B.V

Update your email preferences to choose the types of emails you receive.

Unsubscribe from all future emails

Remote Europe Holding B.V

Copyright © 2025 Remote Europe Holding B.V All rights reserved.

Kraijenhoffstraat 137A 1018RG Amsterdam The Netherlands

by "Remote Team" <hello@remote-comms.com> - 09:21 - 10 Jun 2025 -

Test our US ZIP code data - No signup required

Explore our ZIP code data quality, Master address validation across any platform, Multi-language feature enhancement, Monthly changes, and A sea adrift: Beautiful and borderless.

👋 Hi there! In this edition of The Geodata Insider, I'm excited to share a new way you can explore our ZIP code and city data in the US.

2:30 minute read

📮 Explore our ZIP code data—no signup needed

📈 Master address validation across any platform

🛠️ Multi-language feature enhancement

🔎 Monthly changes

🌊 A sea adrift: Beautiful and borderless

Explore our US ZIP code data—no signup needed

Search any US ZIP code. Get the states, cities, population, accurate coordinates, and standardized formatting. It’s the same data that powers operations at Amazon, MercuryGate, and DB Schenker across 247 countries.

Master address validation across any platform

Ready to eliminate address errors and boost data quality? Here is a list of step-by-step guides to ensure streamlined address validation.

Don't see your platform? Reply to this email with the system you're missing.

Smarter Language selection

Selecting the right language for users in multilingual countries like Canada and Switzerland has always been challenging.

GeoPostcodes multi-language data exports now include intelligent language recommendations by location, identifying the locally preferred language(s) for each area.

This ensures you always use the most appropriate language for your users' locations, improving data accuracy and user experience.

Monthly changes

Full Postal & Street updates

Australia, Netherlands

Postal database patches (small changes)

Argentina (Postcodes), Croatia (Postcodes), Italy (Postcodes), Philippines (Administrative divisions)

Postal boundary patches (small changes)

China, Spain

Administrative boundaries patches (small changes)

Denmark

For a detailed changelog, take a look at the CSV file.

A sea adrift: Beautiful and borderless

Forget everything you know about oceans—there’s one sea that plays by none of the rules. The Sargasso Sea doesn’t touch a single shore. No beaches, no cliffs, no coastline.

Instead, it floats in the middle of the Atlantic, held together by four swirling currents.

Inside this watery loop, a golden jungle of Sargassum seaweed drifts on the surface.

It’s a nursery for turtles, an eel breeding ground, and a pit stop for whales and tuna.

The Sargasso is now home to the North Atlantic Garbage Patch: thousands of plastic pieces per square kilometre.

A place that exists without borders… and reminds us what happens when we cross them. Follow us on LinkedIn for more geographical facts like this!

Kind regards,

Jerome & the GeoPostcodes team

PS: Interested in previous Monthly Product Updates? Read here.

GeoPostcodes, Bld Bischoffsheim 15, 1000 Bruxelles, Belgium

by "Jérôme from GeoPostcodes" <jerome@geopostcodes.com> - 06:35 - 10 Jun 2025 -

Excelling as a leader: Decision making

Make the choice Email 2 of 7

Faster, better decisions

In the previous session, we explored the mindsets and behaviors of growth leaders. In this second email, we'll explore how to cultivate careful—yet bold—decision making. Today’s leaders are faced with hard choices and more pressure than ever. Here are some ways to set yourself up for more successful decisions.

Executives today spend about 40 percent of their time making decisions—and 60 percent say that time is poorly used. The opportunity costs of these inefficiencies are hard to reconcile: for the typical Fortune 500 company, about 530,000 days of managers’ time is potentially squandered each year. That’s equivalent to around $250 million in wages annually.

Much of this time is spent in meetings, says McKinsey senior partner Aaron De Smet. But “most meetings are designed to not make a decision,” he says. So the inefficiency perpetuates itself.

It doesn’t have to be this way. Check out these insights to cultivate your skill as a decision maker under any circumstances.

This email contains information about McKinsey’s research, insights, services, or events. By opening our emails or clicking on links, you agree to our use of cookies and web tracking technology. For more information on how we use and protect your information, please review our privacy policy.

You received this email because you subscribed to “The McKinsey Publishing Guide to excelling as a leader.”

Copyright © 2025 | McKinsey & Company, 3 World Trade Center, 175 Greenwich Street, New York, NY 10007

by "McKinsey Publishing Guides" <publishing@email.mckinsey.com> - 03:17 - 10 Jun 2025 -

Your VIP Golf Experience Awaits — Ready to Tee Off in Style?

Hello Sir/Madam,

Are you ready to take your golf game to the next level? At VIP Golf Concierge, we specialise in arranging luxury golf trips at unbeatable prices. Whether you’re dreaming of playing legendary courses in the UK and Ireland or jetting off to the sun-drenched fairways of Spain, Portugal, or beyond — we have you covered!

Here’s what we offer:

- Access to top-tier courses across Britain, Ireland, Europe, and worldwide

- Tee times, accommodation, and transport all taken care of

- A personalised, VIP service that the big tour operators simply can’t match

- But the best part? Book any overseas golf trip with us and you’ll get absolutely free UK golf at renowned venues so you can warm up your game (imagine playing The Belfry, Close House, Celtic Manor, and more – on us!).

Our VIP spots are limited and fill up fast. Don’t miss out on your chance for a truly unforgettable golfing experience.

Ready to get started? Reply to this email or call us on +44(0)7492 189 785 to begin planning your dream golf getaway.

See you on the fairway!

Best regards,

Samantha Taylor

Tour Leader

+44(0)7492 189 785

by "Samantha - VIP Golf Concierge" <info@vipgolfbookings.co.uk> - 03:02 - 10 Jun 2025 -

What are the fashion industry’s biggest themes in 2025?

On McKinsey Perspectives

Growth in emerging markets

Brought to you by Alex Panas, global leader of industries, & Axel Karlsson, global leader of functional practices and growth platforms

Welcome to the latest edition of Only McKinsey Perspectives. We hope you find our insights useful. Let us know what you think at Alex_Panas@McKinsey.com and Axel_Karlsson@McKinsey.com.

—Alex and Axel

•

Fashion’s 2025 outlook. Amid consumers’ unease, supply chain concerns, and shifts in global trade, the fashion industry is facing a challenging year. Despite these risks, McKinsey’s latest State of Fashion research reveals that there are still opportunities for brands to capture. That’s according to Senior Partner Gemma D’Auria, who discusses the research’s key findings in a recent episode of The McKinsey Podcast. Fashion leaders can help their businesses grow by using AI and AI-led tools to engage directly with customers and offer personalized shopping experiences, both online and in stores, D’Auria explains. McKinsey’s previous State of Fashion research found that up to 25% of AI’s potential in fashion can enhance the industry’s creative side.

—Edited by Belinda Yu, editor, Atlanta

This email contains information about McKinsey's research, insights, services, or events. By opening our emails or clicking on links, you agree to our use of cookies and web tracking technology. For more information on how we use and protect your information, please review our privacy policy.

You received this email because you subscribed to the Only McKinsey Perspectives newsletter, formerly known as Only McKinsey.

Copyright © 2025 | McKinsey & Company, 3 World Trade Center, 175 Greenwich Street, New York, NY 10007

by "Only McKinsey Perspectives" <publishing@email.mckinsey.com> - 01:28 - 10 Jun 2025 -

Register: Driving innovation with custom Joule skills and agents

Join us on July 1st for a tour of the Joule Studio experience.

Meet Joule Studio: Drive Innovation with AI Agents & Joule Skills

Tuesday July 1st, 2025

[3 PM ET | 3 PM CET | 3 PM SGT]

AI tools are taking productivity to new levels. But why stop at out-of-the-box solutions?

Join us for an SAP Webcast featuring Joule Studio in SAP Build and see how to create custom AI agents and Joule skills tailored to your business workflows. Get a look at the developer experience and the tools that help you customize and extend Joule’s capabilities. You’ll discover the essentials of building and managing AI agents that are ready to work for your business.

You’ll also learn more about:

- Understanding Joule skills and AI agents

- Building with Joule Studio for SAP and non-SAP systems

- Identifying the right agentic AI use cases

Kind regards,

The SAP Build team

Contact us

See our complete list of local country numbers

SAP (Legal Disclosure | SAP)

This e-mail may contain trade secrets or privileged, undisclosed, or otherwise confidential information. If you have received this e-mail in error, you are hereby notified that any review, copying, or distribution of it is strictly prohibited. Please inform us immediately and destroy the original transmittal. Thank you for your cooperation.

You are receiving this e-mail for one or more of the following reasons: you are an SAP customer, you were an SAP customer, SAP was asked to contact you by one of your colleagues, you expressed interest in one or more of our products or services, or you participated in or expressed interest to participate in a webinar, seminar, or event. SAP Privacy Statement

This email was sent to info@learn.odoo.com on behalf of the SAP Group with which you have a business relationship. If you would like to have more information about your Data Controller(s) please click here to contact webmaster@sap.com.

This offer is extended to you under the condition that your acceptance does not violate any applicable laws or policies within your organization. If you are unsure of whether your acceptance may violate any such laws or policies, we strongly encourage you to seek advice from your ethics or compliance official. For organizations that are unable to accept all or a portion of this complimentary offer and would like to pay for their own expenses, upon request, SAP will provide a reasonable market value and an invoice or other suitable payment process.

This e-mail was sent to info@learn.odoo.com by SAP and provides information on SAP’s products and services that may be of interest to you. If you received this e-mail in error, or if you no longer wish to receive communications from the SAP Group of companies, you can unsubscribe here.

To ensure you continue to receive SAP related information properly, please add sap@mailsap.com to your address book or safe senders list.

by "The SAP Build team" <sap@mailsap.com> - 12:20 - 10 Jun 2025 -

Grab your spot: Join our AI Readiness for SMBs webinar

Build the right AI strategy for your business and learn how to leverage AI effectively to scale your operations.

Live webinar

Prepare for AI and Accelerate Your Business Growth

July 15, 11:30 AM SGT

Register now

Many small and medium businesses are already investing in AI, and growing SMBs are nearly twice as likely to do so. It's clear that AI is a top priority for growth.

Join our upcoming webinar to learn:

• How to assess your business's AI readiness

• Identify use cases for AI

• Develop an AI strategy

• Discover how AI in action can help scale your business

Speakers

Jenn Sei

SMB Product Marketing Manager

Salesforce

Marcus Conway

Solution Engineer

Salesforce

© 2025, Salesforce, Inc.

Salesforce.com 2 Silom Edge, 14th Floor, Unit S14001-S14007, Silom Road, Suriyawong, Bangrak, Bangkok 10500

General Enquiries: +66 2 430 4323

This email was sent to info@learn.odoo.com

Manage Preferences or Unsubscribe | Privacy Statement

Powered by Salesforce Marketing Cloud

by "Salesforce Webinars" <salesforce@mail.salesforce.com> - 11:35 - 9 Jun 2025 -

Precision Plastic Injection Molds | OEM/ODM Solutions | Bairun Molding

Dear Info,

At Dongguan Bairun Mould Co., Ltd, we specialize in high-performance plastic injection molds and precision parts for industries requiring strict tolerances and complex designs. Our services include insert molding, multi-cavity molds, and CNC machining, tailored to your unique specifications.

✅ Key Advantages:

10+ years of expertise in automotive, electronics, and consumer goods

ISO-certified production with 100% QC checks

Custom solutions: material selection (ABS, PP, PC), surface finishes, and rapid prototyping

Request a free design review or explore our portfolio: [Your Website Link]. Let’s turn your concepts into reality.

Best regards,

Bruce Tu

Sales Manager

GR Precision Mold Limited

by "wendy" <wendy@grpmould.com> - 11:29 - 9 Jun 2025 -

Let Us Handle Your Air Freight Needs with Care and Expertise From China To Mexico

Dear Mr/Ms,

You can throw coins into a fountain and wish for:

Fast transit

Low rates

Flawless communication

Or... you can email us. We’re not magical, but we do answer. Fast. And honestly.

From China to Mexico, no wishes needed.

Please find below our FOB air rates for general cargo:

ASLG FOB Rate - Week 24 2025, AIR EXPORT

Carrier | Org | Dest | Route | 100+ | 300+ | 500+ | 1000+ | FRQ | T/T | Remark
HU | PEK | MEX | PEK-MEX | $4.65 | $4.65 | $4.65 | $4.65 | Daily | Direct | 1cbm>300kgs stackable
BA/AF | PEK | MEX | PEK-LHR/CDG-MEX | $5.05 | $5.05 | $5.05 | $4.95 | Daily | 4-5 Days | 1cbm>300kgs stackable
NH | PEK | MEX | PEK-NRT-MEX | $5.05 | $5.05 | $5.05 | $4.95 | Daily | 2-3 Days | 1cbm>300kgs stackable
CZ | PVG | NLU | PVG-NLU | $5.35 | $5.35 | $5.35 | $5.35 | D1356 | Direct | 1cbm>300kgs stackable
K4 | CGO | NLU | CGO-NLU | $5.35 | $5.35 | $5.35 | $5.35 | D246 | Direct | 1cbm>300kgs stackable
5Y | PVG | GDL | PVG-GDL | $5.50 | $5.50 | $5.50 | $5.50 | D3 | Direct | 1cbm>300kgs stackable
5Y | XMN | NLU | XMN-NLU | $5.35 | $5.35 | $5.35 | $5.35 | D247 | Direct | 1cbm>300kgs stackable
CA/CZ | SZX | MEX | SZX-MEX | $4.85 | $4.85 | $4.85 | $4.85 | D246 | Direct | 1cbm>300kgs stackable
5Y | HKG | NLU/GDL | HKG-NLU/GDL | $6.20 | $6.20 | $6.20 | $6.20 | TBA | Direct | Battery cargo
CX | HKG | NLU/GDL | HKG-NLU/GDL | $6.20 | $6.20 | $6.20 | $6.20 | D24567 | Direct | Battery cargo
CV | HKG | NLU/GDL | HKG-NLU/GDL | $6.20 | $6.20 | $6.20 | $6.20 | D1235 | Direct | Battery cargo

Please note that if you have cargo ready, I will check for a special air rate on a case-by-case basis. I hope the above is helpful. If there is any progress, please feel free to let me know in time.

Best Regards

Vic Yang

Overseas Departments | Airsupply Logistics Limited

**Focus on international air cargo service, welcome for checking with me any time.**

A: Floor 23th Bldg 13 South Park Baoneng Science and Technology Industrial Park, No.1 Qingxiang Road, Longgang Shenzhen, China

B: SZX/CAN/HKG/CSX/PVG/TSN/PEK

WCA ID: 104615 RA:28426 NVOCC: 09626

From: vic07@air-supply.biz
Sent: 2025-02-19 12:23
To: info
Subject: Let Us Handle Your Air Freight Needs with Care and Expertise From China To Mexico

Dear Mr./Ms.,

We know life’s busy, so when you need to move your goods from China to Mexico, you want it fast and secure, with great service and a great rate.

Well, guess what? We’ve got your back!

Please find below our FOB air rates for general cargo:

ASLG FOB Rate - Week 8 2025, AIR EXPORT

Carrier | Org | Dest | Route | 100+ | 300+ | 500+ | 1000+ | FRQ | T/T | Remark
HU | PEK | MEX | PEK-MEX | $3.06 | $3.06 | $2.72 | $2.72 | Daily | Direct | 1cbm>300kgs stackable
BA/AF | PEK | MEX | PEK-LHR/CDG-MEX | $3.06 | $3.06 | $2.72 | $2.72 | Daily | 4-5 Days | 1cbm>300kgs stackable
NH | PEK | MEX | PEK-NRT-MEX | $3.06 | $3.06 | $2.72 | $2.72 | Daily | 2-3 Days | 1cbm>300kgs stackable
CZ | PVG | NLU | PVG-NLU | $4.00 | $4.00 | $4.00 | $4.00 | D146 | Direct | 1cbm>300kgs stackable
K4 | CGO | NLU | CGO-NLU | / | / | / | / | D246 | Direct | 1cbm>300kgs stackable
5Y | PVG | GDL | PVG-GDL | $4.00 | $4.00 | $4.00 | $4.00 | D3 | Direct | 1cbm>300kgs stackable
5Y | XMN | NLU | XMN-NLU | / | / | / | / | D247 | Direct | 1cbm>300kgs stackable
CA/CZ | SZX | NLU | SZX-NLU | $3.00 | $3.00 | $2.85 | $2.85 | D246 | Direct | 1cbm>300kgs stackable
5Y | HKG | NLU/GDL | HKG-NLU/GDL | $6.10 | $6.10 | $6.10 | $6.10 | TBA | Direct | Battery cargo
CX | HKG | NLU/GDL | HKG-NLU/GDL | $6.10 | $6.10 | $6.10 | $6.10 | D24567 | Direct | Battery cargo
CV | HKG | NLU/GDL | HKG-NLU/GDL | $6.10 | $6.10 | $6.10 | $6.10 | D1235 | Direct | Battery cargo

Please note that if you have cargo ready, I will check for a special air rate on a case-by-case basis. I hope the above is helpful. If there is any progress, please feel free to let me know. We are eager to explore potential collaboration and support your logistics requirements. Please don’t hesitate to reach out for more details.

From: vic07@air-supply.biz
Sent: 2025-01-02 17:22
To: info
Subject: Let Us Handle Your Air Freight Needs with Care and Expertise From China To Mexico

Dear Mr./Ms.,

Happy New Year 2025! Imagine this: your critical cargo glides effortlessly from China’s busiest airports and lands precisely on schedule, handled with precision and care every step of the way and moved efficiently by our airline partners.

My name is Vic from Airsupply, where we specialize in providing expert logistics solutions tailored to the needs of international trade. At Airsupply, we make this vision a reality. Here’s how we roll out the red carpet for your shipments:

Our Services:

- Extensive Network: Direct/Transfer routes from major Chinese airports (HKG/SZX/CAN/PVG/PEK/CGO) to MX/US/CA and Latin America, partnering with leading carriers (CZ/CA/HU/NH/OZ/K4/5Y/FX/CX/CV etc...).

- Global Certifications: IATA-certified, JCtrans Golden Member, WCA-approved.

- Strategic Expertise: Master loader at HKG Airport with exclusive airline advantages (CX/CV/5Y/FX/M7/OZ/KE/LO etc...).

- DG Cargo Handling: Safe and compliant transport of hazardous materials (Class 2/3/4/5/6/8/9) with IATA DGR approval.

- Integrated Logistics: Inland trucking, door-to-door DDU/DDP delivery, and seamless customs clearance for air and sea shipments.

- Value-Added Services: Cargo insurance, charter services, project cargo handling, custom brokerage, and trade show logistics expertise.

At the same time, we also need your company to provide us with the following services:

- Air/Sea Freight: Efficient transport from your country to China or other global ports.

- Inland Trucking/Delivery: Including DDU & DDP for door-to-door services.

- Customs Clearance: Seamless import and export customs clearance for both air and sea.

We look forward to exploring collaboration opportunities and supporting your logistics needs. Please feel free to contact me for more information.

Thank you for considering Airsupply. We hope to contribute to your global success.

If you would like to learn more about us, please watch our YouTube video: https://youtu.be/euPxeTaVxv0

If you do not wish to receive future emails from us, please let us know and we will remove you from our mailing list. Thanks.

by "vic07@air-supply.biz" <vic07@air-supply.biz> - 11:26 - 9 Jun 2025 -

Introduction to Precision Metal Processing Solutions from Zhuolida

Dear Info,

This is Zhuolida-etching, sales manager at Zhuolida. We are one of the biggest metal processing manufacturers in China.

I understand that you are interested in precision metal parts. Our company has extensive experience with products such as automotive spring plates, gaskets, and automotive oil filter mesh.

We combine precision metal etching, precision electroforming, laser cutting, and surface plating, and we are China's largest multi-process metal parts manufacturer.

We have worked for a long time with customers such as Kyocera and Toyota on such products.

Best Regards

Zhuolida-etching

Project Manager

Shenzhen Zhuolida Electronics Co.,Ltd.

WhatsApp:+8618938693450/+860755-27088292

Add: Building A3, Huafa Industrial Park, Fuyong Town, Fuyuan Road, Fuyong Town, Baoan District, Shenzhen,China.

by "zhuolida-etching" <zhuolida-etching@zld-waimao.com> - 08:25 - 9 Jun 2025 -

High-Quality Quartz Products for Your Business Needs

Dear Info,

I hope you’re doing well. I’m karensun, and I represent SouthEast Quartz, a leading manufacturer specializing in high-quality quartz products.

We offer a wide range of products including quartz sheets, tubes, crucibles, glass tubes, slides, boats, rods, and more, used across industries such as semiconductor, solar energy, chemicals, medical devices, and scientific instruments. Our products are well-regarded for their purity, durability, and performance.

With advanced technology, strict quality control, and a strong reputation in both domestic and international markets, we serve clients in over 20 provinces in China and export to regions like Korea, Southeast Asia, Europe, and the Americas.

If you’re interested in discussing how our quartz products can meet your needs, please feel free to reach out. We look forward to the opportunity to work with you.

Thanks and best regards

karensun

SouthEast Quartz Products Co., Ltd

Mob:+86-135 8528 6180

Email: karen.sun@dnquartz.com

Website:www.dnquartz.com

by "Mamaclay Kalua" <mamaclaykalua49@gmail.com> - 07:47 - 9 Jun 2025 -

Your team is invited to a free sales class!

Hello, good day:

It was a pleasure speaking with your team, who kindly shared this email address with me because they saw that we could contribute a lot to your company. Thank you very much for your openness.

I am writing because I am convinced that what we do can add a great deal of value to your company's sales department. I will be brief:

I would like to arrange a free demo: a live virtual class of about 25 minutes, designed for sales teams that want to improve their close rate with practical techniques that have immediate impact. During the session, besides showing what we do, participants take away ideas they can apply from day one. It is not a sales presentation; it is a real experience of what we offer.

I understood that this decision was not in their hands, so I would greatly appreciate your help in passing this proposal on to the right person (perhaps the head of the sales department or whoever leads the sales team).

Here are some links with more information and real cases from companies we are already working with:

Website: https://factorstudios.io/

Success stories page: https://www.campamentodeventa.com/casos-de-exito

Instagram, César (CEO of the corporate training area): https://www.instagram.com/cesarjorqueraq/

Instagram, Tino (founder of the universidad del closer): https://www.instagram.com/tino.mossu/

Instagram, Teo (founder of the area for placing salespeople in companies):

https://www.instagram.com/teotinivelli/

by "FactorStudios" <ventasfactorstudios@gmail.com> - 02:12 - 9 Jun 2025 -

Our New Book, Mobile System Design Interview, Is Now Available

Our new book, Mobile System Design Interview, is available on Amazon!

Book author: Manuel Vicente Vivo

What’s inside?

- An insider's take on what interviewers really look for and why.

- A 5-step framework for solving any mobile system design interview question.

- 7 real mobile system design interview questions with detailed solutions.

- 24 deep dives into complex technical concepts and implementation strategies.

- 175 topics covering the full spectrum of mobile system design principles.

Table of Contents

Chapter 1: Introduction

Chapter 2: A Framework for Mobile System Design Interviews

Chapter 3: Design a News Feed App

Chapter 4: Design a Chat App

Chapter 5: Design a Stock Trading App

Chapter 6: Design a Pagination Library

Chapter 7: Design a Hotel Reservation App

Chapter 8: Design the Google Drive App

Chapter 9: Design the YouTube app

Chapter 10: Mobile System Design Building Blocks

Quick Reference Cheat Sheet for MSD Interview

If you have any questions, please leave a comment.

© 2025 ByteByteGo

548 Market Street PMB 72296, San Francisco, CA 94104

by "ByteByteGo" <bytebytego@substack.com> - 11:37 - 9 Jun 2025